Unsupervised Multimodal Video-to-Video Translation via Self-Supervised Learning

Kangning Liu* Shuhang Gu* Andres Romero Radu Timofte

Abstract

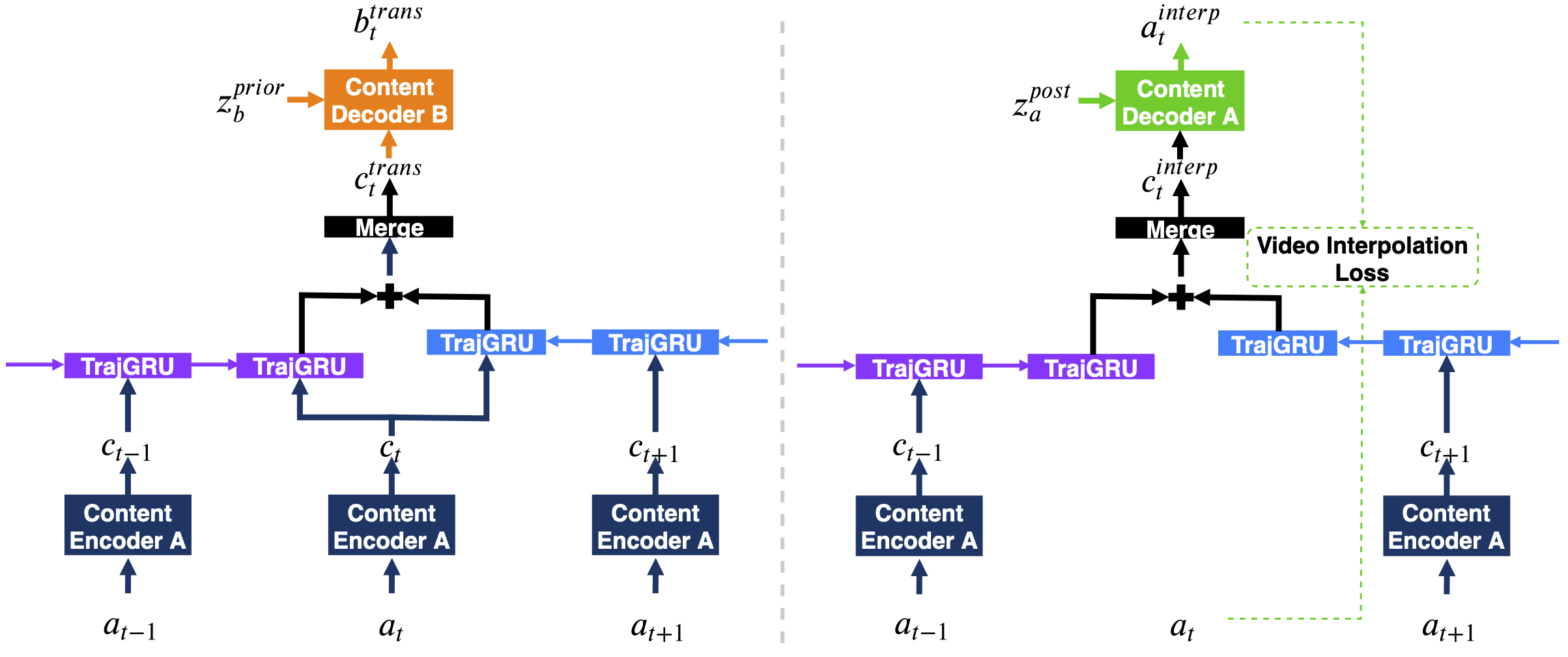

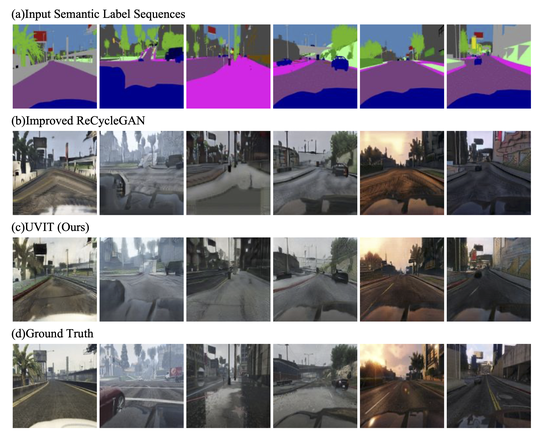

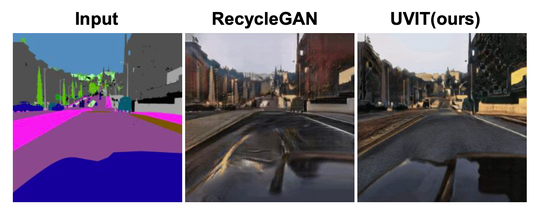

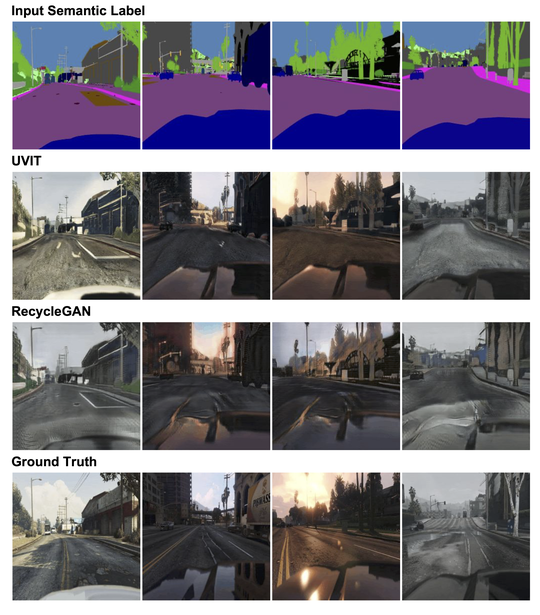

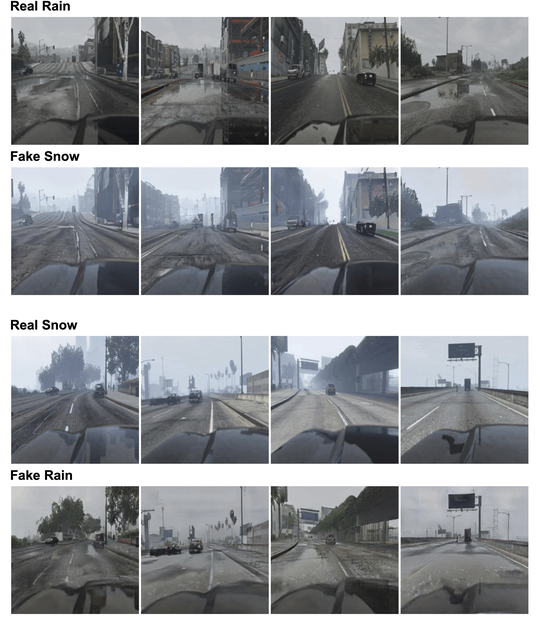

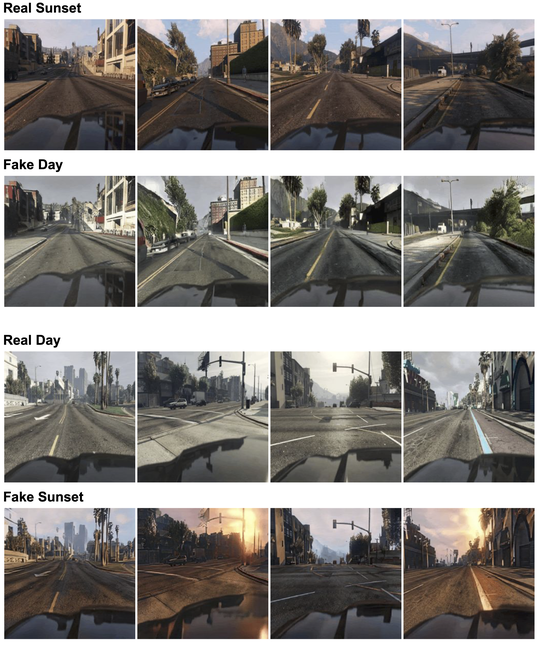

Existing unsupervised video-to-video translation methods fail to produce translated videos that are simultaneously frame-wise realistic, semantics-preserving, and consistent at the video level. In this work, we propose a novel unsupervised video-to-video translation model. Our model decomposes style and content, uses a specialized encoder-decoder structure, and propagates inter-frame information through bidirectional recurrent neural network (RNN) units. The style-content decomposition enables long-term style-consistent video translation and provides a good interface for modality-flexible translation. In addition, by changing the input frames and style codes incorporated in our translation, we propose a video interpolation loss, which captures temporal information within the sequence to train our building blocks in a self-supervised manner. Our model can produce photo-realistic, spatio-temporally consistent translated videos in a multimodal way. Subjective and objective experimental results validate the superiority of our model over existing methods.
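The idea behind a video interpolation loss can be illustrated with a toy example: hold out one frame, summarize the frames before it with a forward recurrent pass and the frames after it with a backward pass, and penalize the error of the resulting prediction. The sketch below is not the model described above. The names `recurrent_pass` and `interpolation_loss`, the exponential-average recurrence, the simple averaging of the two directions, and the L1 penalty are all assumptions chosen for illustration; the actual model uses learned encoders, decoders, and RNN units.

```python
import numpy as np

def recurrent_pass(frames, decay):
    """Toy recurrent unit (assumption): h[t] = decay * h[t-1] + (1 - decay) * frames[t]."""
    states = np.zeros_like(frames, dtype=float)
    h = frames[0].astype(float)
    for t, x in enumerate(frames):
        h = decay * h + (1.0 - decay) * x
        states[t] = h
    return states

def interpolation_loss(frames, t, decay=0.0):
    """L1 error when frame t is held out and predicted from both temporal directions."""
    if not 0 < t < len(frames) - 1:
        raise ValueError("t must be an interior frame index")
    # Forward pass sees frames 0..t-1; its last state summarizes the past.
    forward = recurrent_pass(frames[:t], decay)
    # Backward pass sees frames T-1..t+1; its last state summarizes the future.
    backward = recurrent_pass(frames[t + 1:][::-1], decay)
    # Hypothetical fusion: average the two directional summaries.
    prediction = 0.5 * (forward[-1] + backward[-1])
    return float(np.mean(np.abs(prediction - frames[t])))

# Five 2-D "frames" moving linearly; the held-out middle frame is recoverable.
linear = np.arange(5)[:, None] * np.array([1.0, 2.0])
smooth_loss = interpolation_loss(linear, t=2)

# Perturbing the middle frame breaks temporal consistency and raises the loss.
jumpy = linear.copy()
jumpy[2] += 5.0
jumpy_loss = interpolation_loss(jumpy, t=2)
```

In this toy setting the loss needs no labels: the supervision signal is the held-out frame itself, which is what makes the training self-supervised.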

Citing

@misc{2004.06502,
  Author = {Kangning Liu and Shuhang Gu and Andres Romero and Radu Timofte},
  Title = {Unsupervised Multimodal Video-to-Video Translation via Self-Supervised Learning},
  Year = {2020},
  Eprint = {arXiv:2004.06502},
}

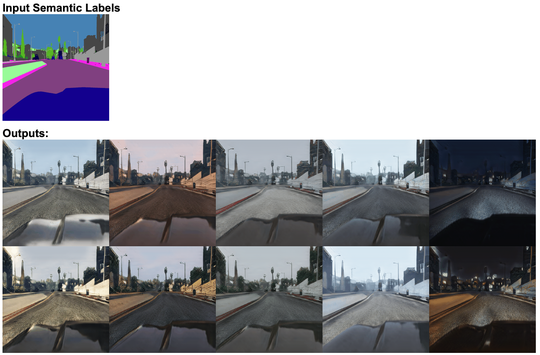

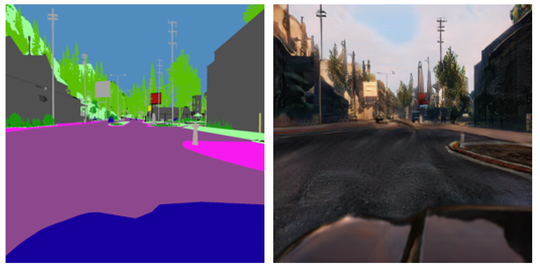

UVIT: Multi-subdomain & Multimodality

UVIT: Overview

UVIT: video translation and video interpolation